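Last time, I built a prediction model with mljar, an AutoML library.

Its accuracy was the best of all the models I had built so far.

This time, I will use a different AutoML library, AutoGluon, and see how the result compares.

I summarized how to set up AutoML environments on a Mac in the articles below. Most of the installation is done with pip, so similar commands may also work on Linux.

Note: anything installed with brew install needs to be replaced with yum or apt.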

(MLJAR) Setting up three AutoML environments in Python

(AutoGluon) Setting up three AutoML environments in Python

(auto-sklearn) Setting up three AutoML environments in Python

Evaluation metric

The Titanic dataset is scored on accuracy: the proportion of passengers whose survival was predicted correctly.

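Preparing the data for analysis

Missing-value handling and feature engineering were done beforehand, and the resulting data was exported.

To reproduce the results in this article, please follow the article below first to prepare the data.

Summary of creating the Titanic modeling data

(Part 3-5) The variable-selection article for the Titanic dataset included code to create and export the modeling data, but it was hard to follow, so I simplified and consolidated it. Running it from top to bottom produces titanic_train.csv and titanic...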

Loading the training and evaluation data

import pandas as pd

import numpy as np

# Load the training and evaluation data of the Titanic dataset

df_train = pd.read_csv("/Users/hinomaruc/Desktop/blog/dataset/titanic/titanic_train.csv")

df_eval = pd.read_csv("/Users/hinomaruc/Desktop/blog/dataset/titanic/titanic_eval.csv")

Overview check

# Overview check

df_train.info()

Out[0]

RangeIndex: 891 entries, 0 to 890

Data columns (total 22 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 PassengerId 891 non-null int64

1 Survived 891 non-null int64

2 Pclass 891 non-null int64

3 Name 891 non-null object

4 Sex 891 non-null object

5 Age 891 non-null float64

6 SibSp 891 non-null int64

7 Parch 891 non-null int64

8 Ticket 891 non-null object

9 Fare 891 non-null float64

10 Cabin 204 non-null object

11 Embarked 891 non-null object

12 FamilyCnt 891 non-null int64

13 SameTicketCnt 891 non-null int64

14 Pclass_str_1 891 non-null float64

15 Pclass_str_2 891 non-null float64

16 Pclass_str_3 891 non-null float64

17 Sex_female 891 non-null float64

18 Sex_male 891 non-null float64

19 Embarked_C 891 non-null float64

20 Embarked_Q 891 non-null float64

21 Embarked_S 891 non-null float64

dtypes: float64(10), int64(7), object(5)

memory usage: 153.3+ KB

# Overview check

df_eval.info()

Out[0]

RangeIndex: 418 entries, 0 to 417

Data columns (total 21 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 PassengerId 418 non-null int64

1 Pclass 418 non-null int64

2 Name 418 non-null object

3 Sex 418 non-null object

4 Age 418 non-null float64

5 SibSp 418 non-null int64

6 Parch 418 non-null int64

7 Ticket 418 non-null object

8 Fare 418 non-null float64

9 Cabin 91 non-null object

10 Embarked 418 non-null object

11 Pclass_str_1 418 non-null float64

12 Pclass_str_2 418 non-null float64

13 Pclass_str_3 418 non-null float64

14 Sex_female 418 non-null float64

15 Sex_male 418 non-null float64

16 Embarked_C 418 non-null float64

17 Embarked_Q 418 non-null float64

18 Embarked_S 418 non-null float64

19 FamilyCnt 418 non-null int64

20 SameTicketCnt 418 non-null int64

dtypes: float64(10), int64(6), object(5)

memory usage: 68.7+ KB

# Plot settings

import seaborn as sns

from matplotlib import ticker

import matplotlib.pyplot as plt

sns.set_style("whitegrid")

from matplotlib import rcParams

rcParams['font.family'] = 'Hiragino Sans' # on Mac

#rcParams['font.family'] = 'Meiryo' # on Windows

#rcParams['font.family'] = 'VL PGothic' # on Linux

rcParams['xtick.labelsize'] = 12 # font size of the x-axis tick labels

rcParams['ytick.labelsize'] = 12 # font size of the y-axis tick labels

rcParams['axes.labelsize'] = 18 # font size of the axis labels

rcParams['figure.figsize'] = 18,8 # figure size (inches)

Splitting the training data into train and test sets for modeling

# Split into training data and test data.

from sklearn.model_selection import train_test_split

x_train, x_test = train_test_split(df_train, test_size=0.20,random_state=100)

FEATURE_COLS=[

'Age'

, 'Fare'

, 'SameTicketCnt'

, 'Pclass_str_1'

, 'Pclass_str_3'

, 'Sex_female'

, 'Embarked_Q'

, 'Embarked_S'

, 'Survived'

]

X_train = x_train[FEATURE_COLS] # features plus the Survived label (train)

X_test = x_test[FEATURE_COLS] # features plus the Survived label (test)

AutoGluon

Building the model

from autogluon.tabular import TabularPredictor

predictor = TabularPredictor(label="Survived", problem_type="binary", path="RESULT_AUTOGLUON").fit(X_train, time_limit=600)

Out[0]

Warning: path already exists! This predictor may overwrite an existing predictor! path="RESULT_AUTOGLUON"

Beginning AutoGluon training ... Time limit = 600s

AutoGluon will save models to "RESULT_AUTOGLUON/"

AutoGluon Version: 0.4.2

Python Version: 3.8.13

Operating System: Darwin

Train Data Rows: 712

Train Data Columns: 8

Label Column: Survived

Preprocessing data ...

Selected class <--> label mapping: class 1 = 1, class 0 = 0

Using Feature Generators to preprocess the data ...

Fitting AutoMLPipelineFeatureGenerator...

Available Memory: 12834.43 MB

Train Data (Original) Memory Usage: 0.05 MB (0.0% of available memory)

Inferring data type of each feature based on column values. Set feature_metadata_in to manually specify special dtypes of the features.

Stage 1 Generators:

Fitting AsTypeFeatureGenerator...

Note: Converting 5 features to boolean dtype as they only contain 2 unique values.

Stage 2 Generators:

Fitting FillNaFeatureGenerator...

Stage 3 Generators:

Fitting IdentityFeatureGenerator...

Stage 4 Generators:

Fitting DropUniqueFeatureGenerator...

Types of features in original data (raw dtype, special dtypes):

('float', []) : 7 | ['Age', 'Fare', 'Pclass_str_1', 'Pclass_str_3', 'Sex_female', ...]

('int', []) : 1 | ['SameTicketCnt']

Types of features in processed data (raw dtype, special dtypes):

('float', []) : 2 | ['Age', 'Fare']

('int', []) : 1 | ['SameTicketCnt']

('int', ['bool']) : 5 | ['Pclass_str_1', 'Pclass_str_3', 'Sex_female', 'Embarked_Q', 'Embarked_S']

0.2s = Fit runtime

8 features in original data used to generate 8 features in processed data.

Train Data (Processed) Memory Usage: 0.02 MB (0.0% of available memory)

Data preprocessing and feature engineering runtime = 0.25s ...

AutoGluon will gauge predictive performance using evaluation metric: 'accuracy'

To change this, specify the eval_metric parameter of Predictor()

Automatically generating train/validation split with holdout_frac=0.2, Train Rows: 569, Val Rows: 143

Fitting 13 L1 models ...

Fitting model: KNeighborsUnif ... Training model for up to 599.75s of the 599.74s of remaining time.

0.7273 = Validation score (accuracy)

0.05s = Training runtime

0.05s = Validation runtime

Fitting model: KNeighborsDist ... Training model for up to 599.62s of the 599.62s of remaining time.

0.7483 = Validation score (accuracy)

0.02s = Training runtime

0.01s = Validation runtime

Fitting model: LightGBMXT ... Training model for up to 599.57s of the 599.57s of remaining time.

0.8042 = Validation score (accuracy)

2.45s = Training runtime

0.01s = Validation runtime

Fitting model: LightGBM ... Training model for up to 597.1s of the 597.09s of remaining time.

0.8252 = Validation score (accuracy)

0.49s = Training runtime

0.01s = Validation runtime

Fitting model: RandomForestGini ... Training model for up to 596.56s of the 596.55s of remaining time.

0.8112 = Validation score (accuracy)

1.04s = Training runtime

0.07s = Validation runtime

Fitting model: RandomForestEntr ... Training model for up to 595.4s of the 595.39s of remaining time.

0.8112 = Validation score (accuracy)

0.77s = Training runtime

0.07s = Validation runtime

Fitting model: CatBoost ... Training model for up to 594.5s of the 594.5s of remaining time.

0.8252 = Validation score (accuracy)

0.67s = Training runtime

0.0s = Validation runtime

Fitting model: ExtraTreesGini ... Training model for up to 593.81s of the 593.81s of remaining time.

0.8042 = Validation score (accuracy)

0.77s = Training runtime

0.07s = Validation runtime

Fitting model: ExtraTreesEntr ... Training model for up to 592.92s of the 592.91s of remaining time.

0.8112 = Validation score (accuracy)

0.77s = Training runtime

0.07s = Validation runtime

Fitting model: NeuralNetFastAI ... Training model for up to 592.03s of the 592.02s of remaining time.

No improvement since epoch 2: early stopping

0.8112 = Validation score (accuracy)

4.26s = Training runtime

0.02s = Validation runtime

Fitting model: XGBoost ... Training model for up to 587.73s of the 587.72s of remaining time.

0.8392 = Validation score (accuracy)

0.52s = Training runtime

0.01s = Validation runtime

Fitting model: NeuralNetTorch ... Training model for up to 587.19s of the 587.18s of remaining time.

0.8182 = Validation score (accuracy)

3.19s = Training runtime

0.02s = Validation runtime

Fitting model: LightGBMLarge ... Training model for up to 583.97s of the 583.96s of remaining time.

0.8462 = Validation score (accuracy)

0.88s = Training runtime

0.01s = Validation runtime

Fitting model: WeightedEnsemble_L2 ... Training model for up to 360.0s of the 582.25s of remaining time.

0.8671 = Validation score (accuracy)

0.76s = Training runtime

0.0s = Validation runtime

AutoGluon training complete, total runtime = 18.61s ... Best model: "WeightedEnsemble_L2"

TabularPredictor saved. To load, use: predictor = TabularPredictor.load("RESULT_AUTOGLUON/")
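The row counts in the outputs above (891 labelled rows, 712 rows passed to AutoGluon, then 569 training and 143 validation rows) can be reproduced with simple arithmetic. The sketch below assumes that the held-out part of each split is rounded up and the remainder is kept; `split_sizes` is a hypothetical helper written for this illustration, not part of scikit-learn or AutoGluon.

```python
import math


def split_sizes(n_rows, holdout_fraction):
    """Return (n_kept, n_held_out), assuming the held-out count is rounded up."""
    n_held_out = math.ceil(holdout_fraction * n_rows)
    return n_rows - n_held_out, n_held_out


# 891 labelled rows split with test_size=0.20
n_train, n_test = split_sizes(891, 0.20)

# AutoGluon's log reports holdout_frac=0.2 applied to the rows it received
n_fit, n_val = split_sizes(n_train, 0.2)

print(n_train, n_test)  # 712 179
print(n_fit, n_val)     # 569 143
```

So each individual model was fitted on only 569 passengers, and the validation scores in the log are each based on 143 passengers.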

performance = predictor.evaluate(X_test)

Out[0]

Evaluation: accuracy on test data: 0.8379888268156425

Evaluations on test data:

{

"accuracy": 0.8379888268156425,

"balanced_accuracy": 0.8252564102564103,

"mcc": 0.6652608586818662,

"roc_auc": 0.8799358974358975,

"f1": 0.7943262411347518,

"precision": 0.8484848484848485,

"recall": 0.7466666666666667

}

predictor.leaderboard(X_test, silent=True)

Out[0]

| | model | score_test | score_val | pred_time_test | pred_time_val | fit_time | pred_time_test_marginal | pred_time_val_marginal | fit_time_marginal | stack_level | can_infer | fit_order |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | ExtraTreesGini | 0.860335 | 0.804196 | 0.101699 | 0.073818 | 0.773697 | 0.101699 | 0.073818 | 0.773697 | 1 | True | 8 |
| 1 | ExtraTreesEntr | 0.854749 | 0.811189 | 0.105370 | 0.073845 | 0.766306 | 0.105370 | 0.073845 | 0.766306 | 1 | True | 9 |
| 2 | RandomForestGini | 0.849162 | 0.811189 | 0.135036 | 0.074477 | 1.043453 | 0.135036 | 0.074477 | 1.043453 | 1 | True | 5 |
| 3 | RandomForestEntr | 0.843575 | 0.811189 | 0.158493 | 0.074012 | 0.774308 | 0.158493 | 0.074012 | 0.774308 | 1 | True | 6 |
| 4 | WeightedEnsemble_L2 | 0.837989 | 0.867133 | 0.082278 | 0.054091 | 9.603240 | 0.006601 | 0.001283 | 0.756411 | 2 | True | 14 |
| 5 | CatBoost | 0.826816 | 0.825175 | 0.006342 | 0.001920 | 0.674038 | 0.006342 | 0.001920 | 0.674038 | 1 | True | 7 |
| 6 | NeuralNetFastAI | 0.821229 | 0.811189 | 0.028387 | 0.016736 | 4.264223 | 0.028387 | 0.016736 | 4.264223 | 1 | True | 10 |
| 7 | XGBoost | 0.810056 | 0.839161 | 0.009273 | 0.011013 | 0.516064 | 0.009273 | 0.011013 | 0.516064 | 1 | True | 11 |
| 8 | LightGBM | 0.804469 | 0.825175 | 0.011000 | 0.008876 | 0.489312 | 0.011000 | 0.008876 | 0.489312 | 1 | True | 4 |
| 9 | LightGBMLarge | 0.804469 | 0.846154 | 0.013757 | 0.008953 | 0.875323 | 0.013757 | 0.008953 | 0.875323 | 1 | True | 13 |
| 10 | NeuralNetTorch | 0.804469 | 0.818182 | 0.024260 | 0.016106 | 3.191219 | 0.024260 | 0.016106 | 3.191219 | 1 | True | 12 |
| 11 | LightGBMXT | 0.798883 | 0.804196 | 0.006092 | 0.006964 | 2.452708 | 0.006092 | 0.006964 | 2.452708 | 1 | True | 3 |
| 12 | KNeighborsDist | 0.692737 | 0.748252 | 0.009749 | 0.014040 | 0.021805 | 0.009749 | 0.014040 | 0.021805 | 1 | True | 2 |
| 13 | KNeighborsUnif | 0.631285 | 0.727273 | 0.006563 | 0.054864 | 0.047228 | 0.006563 | 0.054864 | 0.047228 | 1 | True | 1 |
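One thing the leaderboard shows is that the ranking by validation score and the ranking by test score disagree. As a small sketch, the snippet below copies the `model`, `score_test` and `score_val` columns by hand from the output above (it does not call AutoGluon) and picks the top model under each criterion; the variable names are mine.

```python
# (model, score_test, score_val), copied from the leaderboard output above
leaderboard = [
    ("ExtraTreesGini", 0.860335, 0.804196),
    ("ExtraTreesEntr", 0.854749, 0.811189),
    ("RandomForestGini", 0.849162, 0.811189),
    ("RandomForestEntr", 0.843575, 0.811189),
    ("WeightedEnsemble_L2", 0.837989, 0.867133),
    ("CatBoost", 0.826816, 0.825175),
    ("NeuralNetFastAI", 0.821229, 0.811189),
    ("XGBoost", 0.810056, 0.839161),
    ("LightGBM", 0.804469, 0.825175),
    ("LightGBMLarge", 0.804469, 0.846154),
    ("NeuralNetTorch", 0.804469, 0.818182),
    ("LightGBMXT", 0.798883, 0.804196),
    ("KNeighborsDist", 0.692737, 0.748252),
    ("KNeighborsUnif", 0.631285, 0.727273),
]

# Best model according to the validation split (what AutoGluon selects on)
best_by_val = max(leaderboard, key=lambda row: row[2])

# Best model according to the held-out test split
best_by_test = max(leaderboard, key=lambda row: row[1])

print("best by score_val :", best_by_val[0])   # WeightedEnsemble_L2
print("best by score_test:", best_by_test[0])  # ExtraTreesGini
```

With only 143 validation rows and 179 test rows, differences of a few passengers are enough to reorder the models, so I would not read too much into the exact ranking.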

Checking accuracy

# Return the mean accuracy on the given data and labels.

from sklearn.metrics import accuracy_score

print("train",accuracy_score(X_train["Survived"], predictor.predict(X_train)))

print("test", accuracy_score(X_test["Survived"], predictor.predict(X_test)))

Out[0]

train 0.9185393258426966

test 0.8379888268156425

# https://scikit-learn.org/stable/modules/generated/sklearn.metrics.ConfusionMatrixDisplay.html

import matplotlib.pyplot as plt

from sklearn.metrics import ConfusionMatrixDisplay

from sklearn.metrics import confusion_matrix

print(confusion_matrix(X_test["Survived"], predictor.predict(X_test)))

Out[0]

[[94 10]
 [19 56]]
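The metrics reported by `predictor.evaluate` earlier can be recomputed by hand from this confusion matrix, which is a useful sanity check. The sketch below uses the standard textbook formulas and assumes scikit-learn's layout (rows are true labels, columns are predictions, class 0 first); the variable names are mine.

```python
import math

# Confusion matrix printed above: rows = true label, columns = predicted label.
# Class 0 = did not survive, class 1 = survived.
tn, fp = 94, 10
fn, tp = 19, 56

total = tn + fp + fn + tp

accuracy = (tp + tn) / total
precision = tp / (tp + fp)
recall = tp / (tp + fn)
specificity = tn / (tn + fp)
balanced_accuracy = (recall + specificity) / 2
f1 = 2 * precision * recall / (precision + recall)
mcc = (tp * tn - fp * fn) / math.sqrt(
    (tp + fp) * (tp + fn) * (tn + fp) * (tn + fn)
)

print("total rows       ", total)
print("accuracy         ", accuracy)
print("balanced_accuracy", balanced_accuracy)
print("precision        ", precision)
print("recall           ", recall)
print("f1               ", f1)
print("mcc              ", mcc)
```

The values agree with the accuracy (0.8380), balanced accuracy (0.8253), precision (0.8485), recall (0.7467), F1 (0.7943) and MCC (0.6653) shown in the evaluation output. The model misses 19 of the 75 survivors, while only 10 of the 104 non-survivors are wrongly predicted as survivors.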

Uploading the predictions to Kaggle

FEATURES=[

'Age'

, 'Fare'

, 'SameTicketCnt'

, 'Pclass_str_1'

, 'Pclass_str_3'

, 'Sex_female'

, 'Embarked_Q'

, 'Embarked_S'

]

df_eval["Survived"] = predictor.predict(df_eval[FEATURES])

df_eval[["PassengerId","Survived"]].to_csv("titanic_submission.csv",index=False)

!/Users/hinomaruc/Desktop/blog/my-venv/bin/kaggle competitions submit -c titanic -f titanic_submission.csv -m "model #010. autogluon"

Out[0]

100%|████████████████████████████████████████| 2.77k/2.77k [00:05<00:00, 544B/s]

Successfully submitted to Titanic - Machine Learning from Disaster

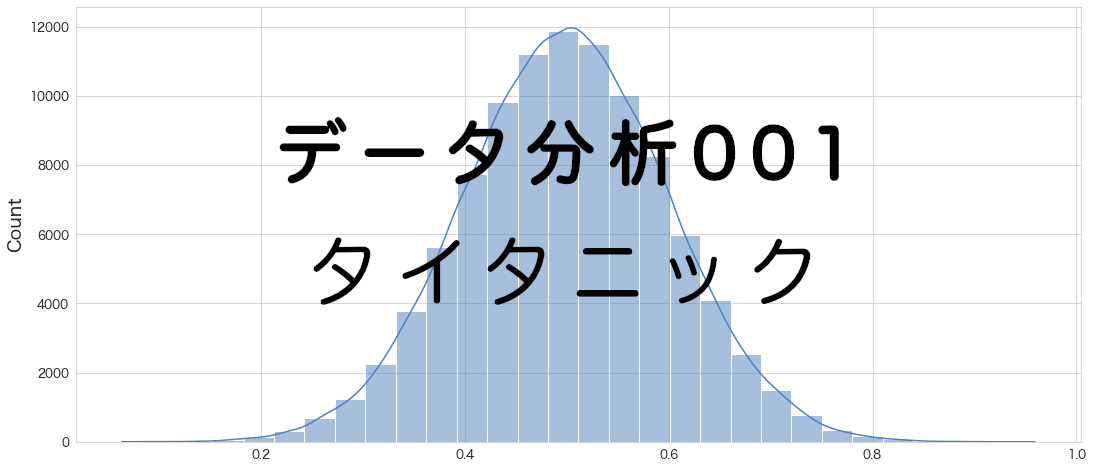

Accuracy result on Kaggle

0.77751

Summary

AutoGluon scored an accuracy of 0.77751 on Kaggle.

This beats the mljar result (0.77511), which had been the provisional top score.
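To put the gap between 0.77751 and 0.77511 in perspective, the scores can be converted back into counts of correctly predicted passengers. This is a rough sketch under two assumptions of mine that the article does not confirm: that Kaggle scores all 418 rows of the evaluation data, and that it displays accuracy cut off (not rounded) at five decimals. `score_units` and `implied_correct` are hypothetical helpers for this illustration.

```python
N_EVAL_ROWS = 418  # rows in titanic_eval.csv, per df_eval.info()


def score_units(n_correct, n_rows=N_EVAL_ROWS):
    """Accuracy in units of 1e-5, truncated (assumed display rule)."""
    return n_correct * 100_000 // n_rows


def implied_correct(displayed_units, n_rows=N_EVAL_ROWS):
    """All correct-prediction counts that would display as the given score."""
    return [k for k in range(n_rows + 1) if score_units(k, n_rows) == displayed_units]


autogluon_correct = implied_correct(77751)  # displayed score 0.77751
mljar_correct = implied_correct(77511)      # displayed score 0.77511

print("AutoGluon:", autogluon_correct)  # [325]
print("mljar    :", mljar_correct)      # [324]
```

If those assumptions hold, AutoGluon predicted 325 passengers correctly and mljar 324, so the improvement corresponds to a single passenger.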